Many of us are familiar with the term bias. It’s one of those concepts that has made its way into common parlance, generally understood to mean factors that sway judgment in a particular direction. The presence and pitfalls of biased decision making have long been on my radar, and I discussed them in my podcast conversation with Carol Tavris and Elliot Aronson. It turns out there is another, equally significant source of errors in judgment: noise. Both bias and noise are fundamental concepts that must be understood and accounted for in order to evaluate science well and make the most accurate decisions possible.


What is Noise?

Noise is the unwanted variability in a set of responses or judgments about the same thing. I say unwanted because the variability, in this case, is not beneficial but rather represents deviation, or error. A noisy system is one in which decisions about a given question vary widely. For example, if a patient consults four doctors and each assigns a different stage to the same cancer, the determinations are, collectively, undesirably noisy.

How Bias and Noise Work Together

This essay, written by Daniel Kahneman, Olivier Sibony, and Cass Sunstein, discusses how bias and noise relate to one another. Importantly, bias and noise are independent of one another, but both are always present to some degree in human decision making. To illustrate the two together, the essay provides a useful example: a scale that gives an average reading that is too high or too low is biased. If the scale gives different readings of the same weight in quick succession, it is noisy.

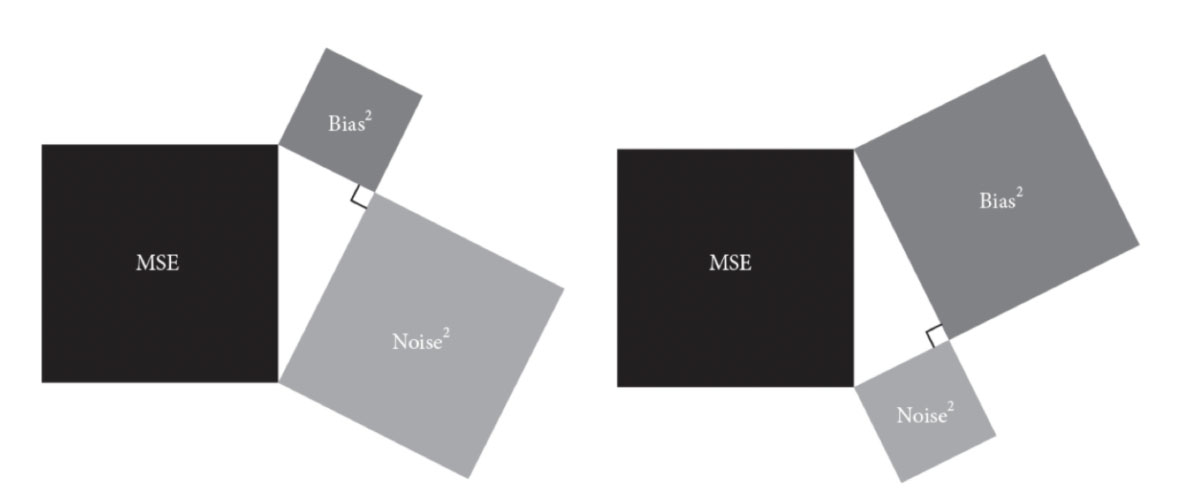

In their recently published book on the same topic, the essay authors illustrate how much noise contributes to overall error (measured as the mean squared error, or MSE) with the accompanying figure, which shows that MSE equals the sum of bias squared and noise squared. Of the two scenarios shown, one has more noise than bias (left) and the other has more bias than noise (right). In both, MSE is the same. The point is that while bias is perhaps more commonly accounted for in the decision-making process, reducing and preventing noise deserves the same emphasis. Ultimately, the aim is to improve accuracy by reducing both the unwanted variability (noise) and the average error (bias) in the decision-making process.
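To make the error equation concrete, here is a minimal Python sketch that applies it to the scale example. The readings and the true weight are made-up numbers chosen for illustration, and `error_decomposition` is a hypothetical helper name, not something taken from the book. Bias is computed as the average error and noise as the standard deviation of the errors.

```python
from statistics import fmean, pstdev


def error_decomposition(readings, true_value):
    """Return (bias, noise, mse) for repeated readings of one known quantity."""
    errors = [reading - true_value for reading in readings]
    bias = fmean(errors)                   # average error
    noise = pstdev(errors)                 # spread of the errors around that average
    mse = fmean([e ** 2 for e in errors])  # mean squared error
    return bias, noise, mse


TRUE_WEIGHT = 70.0  # hypothetical true weight in kg

# A scale that is right on average but inconsistent (noisy, unbiased).
noisy_scale = [69.0, 71.0, 69.0, 71.0]
# A scale that is consistent but always reads 1 kg high (biased, noise-free).
biased_scale = [71.0, 71.0, 71.0, 71.0]

for name, readings in [("noisy", noisy_scale), ("biased", biased_scale)]:
    bias, noise, mse = error_decomposition(readings, TRUE_WEIGHT)
    # The identity MSE = bias^2 + noise^2 holds for any set of readings.
    assert abs(mse - (bias ** 2 + noise ** 2)) < 1e-9
    print(f"{name}: bias={bias:.2f}, noise={noise:.2f}, MSE={mse:.2f}")
```

With these numbers the two scales end up with the same MSE even though one error comes entirely from noise and the other entirely from bias, which mirrors the two scenarios in the figure.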

How to Identify Noise to Improve Decision Making

In their book, the authors lay out a disciplined process for identifying and preventing noise in order to improve decision-making accuracy. The first step is to conduct a “noise audit” to assess the degree of noise in the system. This audit involves evaluating a set of judgments and asking “how much variation is there between independent judgments?” The second step addresses ways to prevent noise by employing procedures called “decision hygiene” practices. The goal is to produce an independent, fact-based evaluative judgment. Suggestions for reducing noise include aggregating and averaging independent assessments and imposing structure on assessments. The authors also mention that judgments made on absolute scales are noisier than those made on relative scales. As a more extreme solution, human decision making can sometimes be removed altogether and replaced with algorithms. But of course, using rules to replace human judgment has the potential to introduce its own systematic bias (not to mention that a person has to program the machines).
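As a rough illustration of the audit question and of why averaging helps, the sketch below measures the spread of independent judgments of a single case, then measures it again after the judgments are averaged in pairs. The scores, the pairing into panels, and the function names `noise_audit` and `aggregate` are all assumptions made for this example; the authors' actual audit procedure is more involved than this.

```python
from statistics import fmean, pstdev


def noise_audit(judgments):
    """Return (mean, spread) for independent judgments of the same case."""
    return fmean(judgments), pstdev(judgments)


def aggregate(panels):
    """Average the independent judgments within each panel."""
    return [fmean(panel) for panel in panels]


# Hypothetical scores: eight assessors rate the same case on a 0-100 scale,
# working independently and grouped afterward into panels of two.
panels = [(30, 60), (70, 50), (40, 65), (55, 35)]
individual_judgments = [score for panel in panels for score in panel]

_, individual_spread = noise_audit(individual_judgments)
_, panel_spread = noise_audit(aggregate(panels))

print(f"spread of individual judgments: {individual_spread:.1f}")
print(f"spread of panel averages:       {panel_spread:.1f}")
```

In this made-up data the panel averages vary less than the individual scores do. Averaging only addresses noise, though: if every assessor leans the same way, the average inherits that shared bias.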

Importantly, noise, understood as the variability in a set of judgments, is not always undesirable. Take, for instance, the different approaches to treating anything from a headache to a torn ligament. There is not always a single “correct” approach to medical care. Further, different approaches can, in fact, be desirable (which is why I personally try to construct teams of sub-specialists to consult on a single case). Even in an organization in which judgments and decisions are made with a singular voice (and thus, less noise is desirable), individual opinions are still important. There is also value in understanding the reasons for variation between judgments in an organization, which can then inform strategies for increasing accuracy within the larger system.

After reading about noise, I realized that many of the most insightful researchers and analysts I know informally account for noise and bias as sources of error when they analyze scientific literature to form their own opinions on a particular question. Evaluating the quality of evidence on a given topic involves collecting and aggregating independent studies, and without fail, the set of publications will feature varying degrees of noise. To reconcile noise in the data, the aggregation process accounts for the nuances of each individual study before the collection is reviewed together. Close attention is given to the differences between individual studies, which could be sources of bias. Only after adjusting for bias and noise in these ways will a good analyst look for trends in the best available data and derive a judgment or point of view.

To make effective judgments, we need not only information but also a system and process for navigating both bias and noise. The good news is that there are clear procedures to account for bias and, now with a little help from Kahneman, Sibony, and Sunstein, for noise too.

– Peter

Of the three books in the trilogy (Thinking, Fast and Slow; Nudge: The Final Edition; and Noise), which would you suggest reading first if all three are purchased at the same time?

I have read mixed reviews about Noise, but this essay makes it seem worth reading. Do you think it is worth reading?

Peter, your mails on biostatistics and research are always out of this world.

This one on noise is no exception.

DR Bala