I wrote this post almost six years ago (March 21, 2012), but it’s the gift that keeps on giving.

These days, I feel a lot like Bill Murray in Groundhog Day: at least a few times a year, my inbox is stuffed with concerned individuals forwarding me a paper (or, much more often, a story about a paper) implying red meat is going to send me to an early grave. The irony is that being stuck in some sort of sadistic red-meat time loop probably will do the trick. But at least I have meditation to help with that.

To be fair, the red meat studies are not the only culprit. As I mentioned in a Nerd Safari on epidemiology, John Ioannidis and Jonathan Schoenfeld picked 50 ingredients at random out of a cookbook and checked whether each had been associated with cancer. For 40 of the 50 ingredients, they found at least one study reporting an association with an increased or decreased risk of cancer. (The 10 that didn’t make the list were more “obscure,” as the authors put it: bay leaf, cloves, thyme, vanilla, hickory, molasses, almonds, baking soda, ginger, and terrapin. Thank heavens I can still have my terrapin.)

It’s probably not unfair to say that I put together this post as a coping mechanism. Fight fire with fire. You want a time loop? You may see the following post make its way to the front of the queue several times a year. Of course, closing my eyes and covering my ears whenever the latest article provokes a visceral reaction is not the best tack, but it’s important to put things in context first.

This time around, I’m posting not because a new study just came out on red meat and mortality (although I haven’t checked my email in the past five minutes), but because we’re doing a series on this very topic of observational epidemiology.

Studying Studies: Part I – relative risk vs. absolute risk

Studying Studies: Part II – observational epidemiology

Studying Studies: Part III – the motivation for observational studies

I must admit, re-reading this post for the first time since writing it, I thought to myself, ‘Wow, Peter. Chill out…you really wrote that?’ Kinda like when I look at a picture of me from the ’90s. Dude, you wore that?

—P.A., January 2018

§

“For the greatest enemy of truth is very often not the lie—deliberate, contrived and dishonest—but the myth—persistent, persuasive, and unrealistic. Too often we hold fast to the clichés of our forebears. We subject all facts to a prefabricated set of interpretations. We enjoy the comfort of opinion without the discomfort of thought.”

– John F. Kennedy, Yale University commencement address (June 11, 1962)

I’m going to devote this post to a discussion of what I like to call the Scientific Weapon of Mass Destruction: observational epidemiology, at least as it is applied to public health policy.

I had always planned to write about this most important topic soon enough, but the recent study out of Harvard’s School of Public Health generated so many stories like this one that I figured it was worth putting some of my other ideas on the back burner, at least for a week. If you’ve been reading this blog at all, you’ve hopefully figured out that I’m not writing it to get rich. What I’m trying to do is help people understand how to think about what they eat and why. I have my own ideas, shared by some, of what is “good” and what is “bad,” and you’ve probably noticed that I don’t eat like most people.

However, that’s not the real point I want to make. I want to help you become thinkers rather than followers, at least on the topic of health sciences. And that includes not being mindless followers of me or my ideas, of course. Being a critical thinker doesn’t mean you reject everything out there for the sake of being contrarian. It means you question everything out there. I failed to do this in medical school and residency. I mindlessly accepted what I was taught about nutrition without ever looking at the data myself.

Too often we cling to nice stories because they make us feel good, but we don’t ask the hard questions. You’ve had great success improving your health on a vegan diet? No animals have died at your expense. Great! But, why do you think it is you’ve improved your health on this diet? Is it because you stopped eating animal products? Perhaps. What else did you stop eating? How can we figure this out? If we don’t ask these questions, we end up making incorrect linkages between cause and effect. This is the sine qua non of bad science.

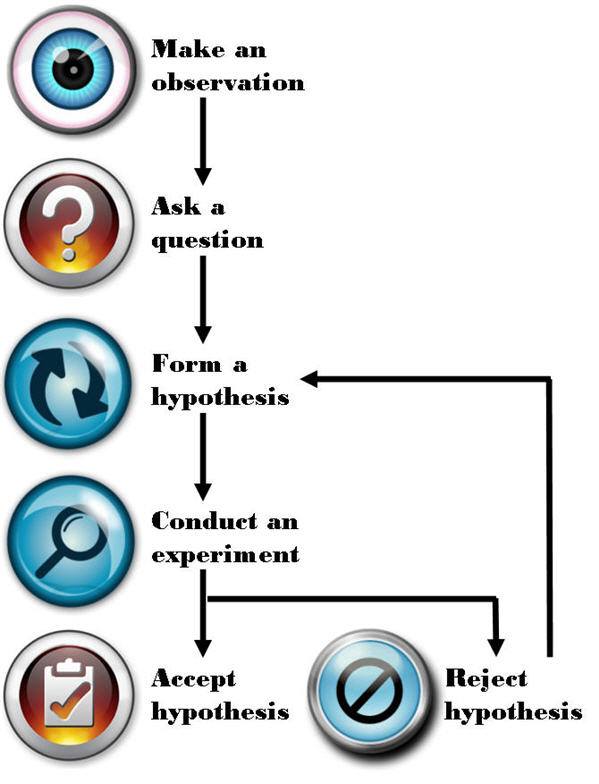

Most disciplines of science—such as physics, chemistry, and biology—use something called the Scientific Method to answer questions. A simple figure of this approach is shown below:

The figure is pretty self-explanatory, so let me get to the part that observational epidemiology inherently omits: “Conduct an experiment.” There is no shortage of observations, questions, or hypotheses in the world of epidemiology and public health, so we’re doing well on that front. It’s that pesky experiment part we’re getting hung up on. Without controlled experiments, it is not possible to establish the relationship between cause and effect.

What is an experiment?

There are several types of experiments, and they are not all equally effective at determining the cause-and-effect relationship. Climate scientists and economists (like one of my favorites, Steven Levitt), for example, often carry out natural experiments. Why? Because the “laboratory” they study can’t actually be manipulated in a controlled setting. For example, when Levitt and his colleagues tried to figure out whether swimming pools or guns were more dangerous to children (i.e., is a child more likely to drown in a home with a swimming pool or to be shot in a home with a gun?), they could only look at historical, or observational, data. They could not design an experiment to study this question prospectively and in a controlled manner.

How would one design such an experiment? In a “dream” world you would find, say, 100,000 families and randomly split them into two groups, group 1 and group 2. Because the assignment is random and the population is large, any differences between the groups (e.g., socioeconomic status, number of kids, parenting styles, geography) would tend to cancel out, leaving groups 1 and 2 statistically identical in every way once divided. The 50,000 group 1 families would then have a swimming pool installed in their backyard, and the 50,000 group 2 families would be given a gun to keep in their house.
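To see why the split has to be random, here is a minimal Python sketch of the dream-world assignment. The family traits (`income`, `kids`, `rural`) and their distributions are invented for illustration, and the function name `randomize_and_compare` is hypothetical; shuffling the list before cutting it in half stands in for random assignment.

```python
import random
import statistics


def randomize_and_compare(n_families=100_000, seed=0):
    """Randomly split simulated families into two groups and return
    (group 1 mean, group 2 mean) for each hypothetical baseline trait."""
    rng = random.Random(seed)
    # Hypothetical baseline traits; distributions are made up for illustration.
    families = [
        {
            "income": rng.lognormvariate(11, 0.5),  # annual household income
            "kids": rng.randint(1, 5),              # number of children
            "rural": rng.random() < 0.2,            # lives in a rural area
        }
        for _ in range(n_families)
    ]
    rng.shuffle(families)  # random assignment: order no longer depends on any trait
    half = n_families // 2
    group1, group2 = families[:half], families[half:]

    summary = {}
    for trait in ("income", "kids", "rural"):
        m1 = statistics.fmean(f[trait] for f in group1)
        m2 = statistics.fmean(f[trait] for f in group2)
        summary[trait] = (m1, m2)
    return summary


if __name__ == "__main__":
    for trait, (m1, m2) in randomize_and_compare().items():
        rel_diff = abs(m1 - m2) / ((m1 + m2) / 2)
        print(f"{trait:>6}: group 1 = {m1:,.3f}  group 2 = {m2:,.3f}  "
              f"relative difference = {rel_diff:.2%}")
```

With 50,000 families per arm, the group means for each trait land very close to each other. The same holds for traits nobody thought to measure, which is what random assignment buys and what statistical adjustment of observational data cannot.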

For a period of time, say 5 years, the scientists would observe the differences in child death rates from these two causes (accidental drownings and gunshot wounds). At the conclusion, provided the study was powered appropriately, the scientists would know which was more hazardous to the life of a child, a home swimming pool or a home gun.
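What does “powered appropriately” mean in numbers? Here is a rough Python sketch, assuming a two-sided test at the 5% level, 80% power, and the common normal-approximation formula for comparing two proportions: n per group ≈ (z₁₋α/₂ + z₁₋β)² × [p₁(1 − p₁) + p₂(1 − p₂)] / (p₁ − p₂)². The death risks used (20 vs. 10 per 100,000 families over 5 years) are purely hypothetical, and the function names are illustrative.

```python
import math
from statistics import NormalDist


def n_per_group(p1, p2, alpha=0.05, power=0.80):
    """Approximate families needed per group to detect risk p1 vs. p2
    (two-sided test, normal approximation, unpooled variance)."""
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)
    z_beta = NormalDist().inv_cdf(power)
    variance = p1 * (1 - p1) + p2 * (1 - p2)
    return math.ceil((z_alpha + z_beta) ** 2 * variance / (p1 - p2) ** 2)


def approx_power(p1, p2, n, alpha=0.05):
    """Approximate power of the same test with n families per group
    (ignores the negligible chance of rejecting in the wrong direction)."""
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)
    se = math.sqrt((p1 * (1 - p1) + p2 * (1 - p2)) / n)
    return NormalDist().cdf(abs(p1 - p2) / se - z_alpha)


if __name__ == "__main__":
    # Hypothetical 5-year child-death risks: pool homes vs. gun homes.
    p_pool, p_gun = 20e-5, 10e-5
    print("families needed per group:", n_per_group(p_pool, p_gun))
    print("power with 50,000 per group:",
          round(approx_power(p_pool, p_gun, 50_000), 2))
```

Under these made-up rates the formula asks for more than 200,000 families per group, and 50,000 per group would have only about a one-in-four chance of detecting the difference. The rarer the outcome, the larger the trial has to be.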

Unfortunately, questions like this (and the other questions studied by folks like Levitt) can’t be studied in a controlled way. Such studies are just impractical, if not impossible, to do.

Similarly, to rigorously test the hypothesis that anthropogenic CO2 causes climate change, we would need another planet Earth with the same number of humans, cows, lakes, oceans, and kittens that did NOT burn fossil fuels for 50 years. Since these scenarios are never going to happen, the folks who carry out natural experiments do the best they can, statistically adjusting the data to separate out as many confounding factors as possible in an effort to identify the relationship between cause and effect.

Enter the holy grail of experiments: the controlled experiment. In a controlled experiment, as the name suggests, the scientists have control over all variables between the groups (typically what we call a “control” group and a “treatment” group). Furthermore, they study subjects prospectively (looking forward, rather than backward, or retrospectively) while changing only one variable at a time. Even an otherwise well-designed experiment, if it changes too many variables at once, prevents the investigator from making the important link between cause and effect.

Imagine a clinical experiment for patients with colon cancer. One group gets randomized to no treatment (“control group”). The other group gets randomized to a cocktail of 14 different chemotherapy drugs, plus radiation, plus surgery, plus hypnosis treatments, plus daily massages, plus daily ice cream sandwiches, plus daily visits from kittens (“treatment group”). A year later the treatment group has outlived the control group, and therefore the treatment has worked. But how do we know EXACTLY what led to the survival benefit? Was it 3 of the 14 drugs? The surgery? The kittens? We cannot know from this experiment. The only way to know for certain if a treatment works is to isolate it from all other variables and test it in a randomized prospective fashion.

As you can see, even a prospective controlled experiment like the one above is not enough if you fail to design the trial correctly. Technically, the fictitious experiment I describe above is not “wrong,” unless someone (for example, the scientist who carried out the trial or the newspapers that report on it) misrepresents it.

If the New York Times and CNN ran the headline “New study proves that kittens cure cancer!” would it be accurate? Not even close. Sadly, most folks would never read the actual study to understand why this bumper-sticker conclusion is categorically false. Sure, it is possible, based on this study, that kittens can cure cancer. But the scientists in this hypothetical study have wasted a lot of time and money if their goal was to determine whether kittens could cure cancer. The best thing this study did was to reiterate a hypothesis. Nothing more. In other words, because of an experimental design problem, this experiment (even assuming it was executed perfectly from a technical standpoint) taught us nothing beyond the fact that the combination of 20 interventions was better than none.
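The kittens problem is one of identifiability, and a toy Python sketch makes it concrete. It assumes two hypothetical worlds: in one, only the kittens help; in the other, only drug 3 helps (the survival numbers are made up, and all names are illustrative). Because every treated patient receives all 20 interventions, the trial only ever observes two arms, and both worlds produce the same observations.

```python
# The 20 bundled interventions from the fictitious colon cancer trial.
INTERVENTIONS = [f"drug_{i}" for i in range(1, 15)] + [
    "radiation", "surgery", "hypnosis", "massage", "ice_cream", "kittens",
]


def survival_world_a(received):
    """Hypothetical world A: only the kittens improve survival."""
    return 0.30 + (0.40 if "kittens" in received else 0.0)


def survival_world_b(received):
    """Hypothetical world B: only drug 3 improves survival."""
    return 0.30 + (0.40 if "drug_3" in received else 0.0)


def run_trial(survival):
    """The trial observes only two arms: nothing, or everything at once."""
    control = survival(frozenset())
    treatment = survival(frozenset(INTERVENTIONS))
    return control, treatment


if __name__ == "__main__":
    print("world A (kittens):", run_trial(survival_world_a))
    print("world B (drug 3): ", run_trial(survival_world_b))
```

Both worlds yield the same control and treatment survival, so no amount of analysis of this trial's data can tell them apart. Only a design that varies the interventions separately could.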

So what does all of this have to do with eating red meat?

In effect, I’ve already told you everything you need to know. I’m not going to spend any time dissecting the actual study published last week [March 12, 2012] that led to the screaming headlines about how red meat eaters are at greater risk of death from all causes (yes, “all causes,” according to this study), because others have already done so a number of times this week alone. Three critical posts on this specific paper can be found here, here, and here.

I can’t recommend strongly enough that you read them all if you really want to understand the countless limitations of this particular study, and why its conclusion should be completely disregarded. If you want bonus points, read the paper first, see if you can identify its failures, then check your “answer” against these posts. As silly as this sounds, it’s actually the best way to know if you’ve really internalized what I’m describing.

Now, I know what you might be thinking: Oh, come on Peter, you’re just upset because this study says something completely opposite to what you want to hear.

Not so. In fact, I have the same criticism of similarly conducted studies that “find” conclusions I agree with. For example, on the exact same day the red meat study was published online (March 12, 2012) in the journal Archives of Internal Medicine, the same group of authors from Harvard’s School of Public Health published another paper in the journal Circulation. This second paper examined the link between sugar-sweetened beverage consumption and heart disease, and it “showed” that consumption of sugar-sweetened beverages increased the risk of heart disease in men.

I agree that sugar-sweetened beverages increase the risk of heart disease (not just in men, of course, but in women, too), along with a whole host of other diseases like cancer, diabetes, and Alzheimer’s disease. But the point remains that this study adds little to nothing to the body of evidence implicating sugar, because it was not a controlled experiment.

This problem is actually rampant in nutrition

We’ve got studies “proving” that eating more grains protects men from colon cancer, that light-to-moderate alcohol consumption reduces the risk of stroke in women, and that low levels of polyunsaturated fats, including omega-6 fats, increase the risk of hip fractures in women. Are we to believe these studies? They sure sound authoritative, and given the way the press reports on them, they’re hard to argue with, right?

How are these studies typically done?

Let’s talk nuts and bolts for a moment. I know some of you might already be zoning out at the detail, but if you want to understand why and how you’re being misled, you actually need to “double-click” (i.e., go one layer deeper) a bit. What the researchers do in these studies is follow a cohort of several tens of thousands of people (nurses, health care professionals, AARP members, etcetera) and ask them what they eat using a food frequency questionnaire (FFQ), an instrument known to be almost fatally flawed in its ability to capture what people really eat. Next, the researchers correlate disease states, morbidity, and maybe even mortality with food consumption, or at least reported food consumption (which is NOT the same thing). So, the end products are correlations: eating food X is associated with a gain of Y pounds, for example. Or eating red meat three times a week is associated with a 50% increase in the risk of death from falling pianos or heart attacks or cancer.

The catch, of course, is that correlations hold no causal information. Just because two events occur in step does not mean you can conclude one causes the other. Often in these articles you’ll hear people give the obligatory, “correlation doesn’t necessarily imply causality.” But saying that suggests a slight disconnect from the real issue. A more accurate statement is “correlation does not imply causality” or “correlations contain no causal information.”

So what explains the findings of studies like this (and virtually every single one of these studies coming out of massive health databases like Harvard’s)?

For starters, the foods associated with weight gain (or whichever disease they are studying) are also the foods associated with “bad” eating habits in the United States—french fries, sweets, red meat, processed meat, etc. Foods associated with weight loss are those associated with “good” eating habits—fruit, low-fat products, vegetables, etc. But, that’s not because these foods cause weight gain or loss, it’s because they are markers for the people who eat a certain way and live a certain way.

Think about who eats a lot of french fries (or a lot of processed meats). They are people who eat at fast food restaurants regularly (or, in the case of processed meats, people who are more likely to be economically disadvantaged). So eating lots of french fries, hamburgers, or processed meats is generally a marker for poor eating habits, which are more common among people who are less economically advantaged and less educated than those who buy their food fresh at the local farmers’ market or at Whole Foods. Furthermore, people eating more french fries and red meat are less health conscious in general (or they wouldn’t be eating french fries and red meat; remember, those of us who do eat red meat regularly are in the slim minority of health-conscious folks). These studies are rife with methodological flaws, and I could devote an entire Ph.D. thesis to this topic alone.
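Here is a minimal Python simulation of how a marker food can look harmful without doing anything. It assumes a made-up cohort in which a single confounder, being “health conscious,” lowers both the chance of eating red meat often and the chance of dying, while red meat itself has no effect at all by construction. All probabilities and function names are invented for illustration.

```python
import random


def simulate_cohort(n=400_000, seed=1):
    """Hypothetical cohort in which red meat has NO effect on death;
    a confounder ('health conscious') drives both diet and death."""
    rng = random.Random(seed)
    people = []
    for _ in range(n):
        health_conscious = rng.random() < 0.5
        eats_meat = rng.random() < (0.2 if health_conscious else 0.6)
        # Death risk depends ONLY on health consciousness, never on meat.
        died = rng.random() < (0.02 if health_conscious else 0.05)
        people.append((health_conscious, eats_meat, died))
    return people


def risk_ratio(people):
    """Death risk among meat eaters divided by death risk among non-eaters."""
    def risk(group):
        return sum(died for _, _, died in group) / len(group)

    eaters = [p for p in people if p[1]]
    non_eaters = [p for p in people if not p[1]]
    return risk(eaters) / risk(non_eaters)


if __name__ == "__main__":
    cohort = simulate_cohort()
    print(f"crude risk ratio: {risk_ratio(cohort):.2f}")
    for hc in (True, False):
        stratum = [p for p in cohort if p[0] == hc]
        print(f"health conscious = {hc}: risk ratio = {risk_ratio(stratum):.2f}")
```

In expectation, the crude risk ratio here is 0.0425 / 0.03 ≈ 1.42, a “42% increased risk” from a food with zero causal effect, while the risk ratio within each stratum of health consciousness is about 1. Real cohorts can only stratify on confounders they measure, and measure well, which is where the FFQ problem comes back in.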

What should we do about this?

I’m guessing most of you (and most physicians and policy makers in the United States, for that matter) are not actually browsing the American Journal of Epidemiology, where one can find studies like this all day long. But occasionally, like last week, the New York Times, Wall Street Journal, Washington Post, CBS, ABC, CNN, and everyone else gets wind of a study like the now-famous red meat study and reports on it in a misleading fashion. Health policy in the United States, and by extension much of the world, is driven by this. It’s not a conspiracy, by the way. It’s incompetence. Big difference. Keep Hanlon’s razor in mind: never attribute to malice that which is adequately explained by stupidity.

In my opinion, this behavior is unethical, and the journalists who report this way (along with the scientists who stand by without correcting them) are doing humanity no favors.

I do not dispute that observational epidemiology has played a role in helping to elucidate “simple” linkages in health sciences (e.g., contaminated water and cholera, or scrotal cancer and chimney sweeps). However, multifaceted or highly complex pathways (e.g., cancer, heart disease) rarely pan out, unless the disease is virtually unheard of without the implicated cause. A great example of this is the linkage between small-cell lung cancer (SCLC) and smoking. We didn’t need a controlled experiment to link smoking to this particular variant of lung cancer, because nothing else has ever been shown to even approach the rate of this type of lung cancer the way smoking has (the reported relative risks of SCLC in current smokers of more than 1.5 packs of cigarettes a day were 111.3 and 108.6, i.e., an increase in relative risk of more than 10,000%). Because of this unique feature, Richard Doll and Austin Bradford Hill were able to design a clever observational analysis that correctly identified the cause-and-effect linkage between tobacco and lung cancer. But this sort of example is the exception, not the rule, when it comes to epidemiology.
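The “more than 10,000%” figure comes from the standard conversion of a relative risk (RR) into a percentage increase in risk. A short worked version, using the two relative risks quoted above and, for comparison, the RR of 1.5 implied by the hypothetical “50% increase” mentioned earlier:

```latex
\begin{aligned}
\text{increase in risk} &= (\mathrm{RR} - 1) \times 100\% \\
\mathrm{RR} = 111.3 &\;\Rightarrow\; (111.3 - 1) \times 100\% = 11{,}030\% \\
\mathrm{RR} = 108.6 &\;\Rightarrow\; (108.6 - 1) \times 100\% = 10{,}760\% \\
\mathrm{RR} = 1.5   &\;\Rightarrow\; (1.5 - 1) \times 100\% = 50\%
\end{aligned}
```

Relative risks of this size are two orders of magnitude beyond the ones typical of diet studies. Note that none of these numbers says anything about absolute risk, which depends on how common the disease is to begin with (the subject of Part I of this series).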

Whether it’s Ancel Keys’ observations and correlations of saturated fat intake and heart disease in his famous Seven Countries Study, which “proved” saturated fat is harmful, or Denis Burkitt’s observation that people in Africa ate more fiber than people in England and had less colon cancer, “proving” that eating fiber is the key to preventing colon cancer, virtually all of the nutritional dogma we are exposed to has not actually been scientifically tested.

Perhaps the most influential current example of observational epidemiology [circa 2012] is the work of T. Colin Campbell, lead author of The China Study, which claims, “the science is clear” and “the results are unmistakable.” Really? Not if you define science the way scientists do. This doesn’t mean Colin Campbell is wrong (though I wholeheartedly believe he is wrong on about 75% of what he says, based on current data). It means he has not done sufficient science to advance the discussion and hypotheses he espouses. If you want to read detailed critiques of this work, please look to Denise Minger and Michael Eades. I can only imagine the contribution to mankind Dr. Campbell could have made had he spent the same amount of time and money doing actual scientific experiments to elucidate the impact of dietary intake on chronic disease. [For example, Campbell could have designed a prospective study following subjects randomized to one of two different types of diets for 10 years, plant-based or animal-based, with all other factors controlled for.] This is one irony of enormous observational epidemiology studies: not only are they of little value, but, in a world of finite resources, they detract from real science being done.

Featured Image credit: Design by K. Pauley (CC BY-SA 2.0)

So what is your opinion on eating meat? Eat more of it? Eat less of it?

At the risk of sounding like a looney, I just want to say…you are my hero!

I love that you encourage everyone to use their brains and to actually THINK things through.

After seeing several of your studies, I began dramatically increasing my good fats, and now I have “cured” my diabetes and the horrific blood sugar swings that tormented and dominated me for most of my adult life. Keep up the fantastic work. You are a gem. Song

Shall we stop eating red meat as well?

https://www.pnas.org/content/early/2014/12/25/1417508112.abstract

If you’re a mouse, yes, at least worth considering.

After reading Richard Feinman’s book “The World Turned Upside Down,” it doesn’t make sense to me to disregard all observational studies without looking at the strength of the evidence. That smoking makes us 30 times more likely to get lung cancer seems worth paying attention to, even if it’s not a double-blind gold standard study. Similarly, gold standard studies that show very small differences in results seem pretty open to question.

Lung cancer (and scrotal cancer and mesothelioma) are very special examples–I’d call them the exceptions, not the rules–that give us great reason to believe we have cause within the correlation.

Almost two years old, still well-sourced and accurate.

https://www.businessinsider.com/13-nutrition-lies-that-made-the-world-sick-and-fat-2013-10?op=1

Some of those claims are exactly the ones you’ve set out to prove (or disprove) with NuSI, and my impression is that for each of them you are either already convinced based on the plentiful available evidence or else would bet that new evidence will confirm them.

You have scrutinized studies more than most people I know. I wonder which of these you personally have the highest confidence in (close to 100% sure) and for which you’d say something more like ‘I would bet so, but studies have not been so strong so far and we do actually need more data’.

At least one caveat I believe you have is on #3, as you explain in the video mentioned in the ‘Random finding (plus pi)’ article: that the safety of SFA consumption seems not to be universal (that is, not all people respond to it equally well physiologically).

And how does the immoral nature of slaughter and massive environmental degradation fit into your statistical model?

Those are important considerations, Gavin. But it’s important to keep the three macro arguments separate: the two you raise (ethical and environmental) and the individual health considerations. Too often people confuse the arguments. They all matter, but I’m asking–very specifically–about the latter.

And how moral is industrial crop-raising and cramming grain and grain-extracted sugar down our gullets, including, by the way, the gullets of industrially raised animals; how environmentally safe is it from a habitat’s point of view? Morally, we’ve been killing animals for food since time immemorial. Today the main reason to be slaughtering them in such numbers is that they’re raised in such numbers, which in turn is possible, because they – can you guess? – are fed on plentiful, subsidized grain.

What do you think of the World Health Organization’s conclusions today that people should eat less red meat because it may cause some forms of cancer? How good was the research in terms of establishing causality? They seem to have established a stronger link between processed meat and cancer. In both cases they don’t know why. It is being widely reported and it is giving the anti-meat people more “evidence” for their claims that we should all eat less meat.

See my FB comment.

Even God says it is ok to eat meat.

“Only whenever your soul craves it you may slaughter, and you must eat meat according to the blessing of Jehovah your God that he has given you, inside all your gates. The unclean one and the clean one may eat it, like the gazelle and like the stag.” Deuteronomy 12:15.

*Your* god says eat meat, at least a subset of available meaty options. Plenty of other gods would disagree.

Suzy, this is the worst argument ever. Even worse than observational epidemiology.

Hi Peter! Huge fan of your blog! I have recently read studies about iron and its role in insulin sensitivity and aging, and I believe iron levels are a missing link in nutrition science, probably explaining why eastern cultures were OK despite eating carbs, the potential harm in red meat, why women are healthier than men until menopause, etc.

Here’s an interesting quote: “Medical scientist Francesco Facchini has done much work in this area. He found that in patients with insulin resistance – what he calls “carbohydrate intolerance” – therapeutic phlebotomy such that the patients got to “near iron deficiency” caused an approximately 50% improvement in insulin sensitivity.”

P.D. Mangan summarizes the findings of various studies quite well in his series of articles on iron:

https://roguehealthandfitness.com/iron-accelerates-aging/

https://roguehealthandfitness.com/iron-levels/

So red meat contains lots of iron, and a person can reduce iron levels by losing/donating blood. So it seems combining low-carb diet with blood donations could be the answer.

I’ve been meandering through this lit for the past year. I think there is something to it, but I don’t think it’s the full story. Too many counterfactual examples. But it could matter in extreme states.

“Vegan” is an old native american word that translates as “poor hunter”.

If God didn’t mean us to eat animals why did he make them out of meat?

cum hoc ergo propter hoc (“with this, therefore because of this”)

A big issue with nutritional science is that you’ll never be able to tease the ‘subject’ out of its own environment. You can easily demonstrate necessity, but never sufficiency, to borrow a causality term. So, although the danger of misinterpreting real science, or the worse danger of inferring stuff from anecdotal evidence, is obvious, we can never be lulled into thinking that reductive approaches will one day crack this nut. They can help however! Complexity forces us to employ other techniques. To make matters worse, our biological evolution is governed by a system, nature, that doesn’t care if we exist. Our will to live and thrive is self-imposed.