John Ioannidis is a physician, scientist, writer, and a Stanford University professor who studies scientific research itself, a process known as meta-research. In this episode, John discusses his staggering finding that the majority of published research is actually incorrect. Using nutritional epidemiology as the poster child for irreproducible findings, John describes at length the factors that play into these false positive results and offers numerous insights into how science can course correct.


We discuss:

- John’s background, and the synergy of mathematics, science, and medicine (2:40);

- Why most published research findings are false (10:00);

- The bending of data to reach ‘statistical significance’, and how bias impacts results (19:30);

- The problem of power: How over- and under-powered studies lead to false positives (26:00);

- Contrasting nutritional epidemiology with genetics research (31:00);

- How to improve nutritional epidemiology and get more answers on efficacy (38:45);

- How pre-existing beliefs impact science (52:30);

- The antidote to questionable research practices infected with bias and bad incentive structures (1:03:45);

- The different roles of public, private, and philanthropic sectors in funding high-risk research that asks the important questions (1:12:00);

- Case studies demonstrating the challenge of epidemiology and how even the best studies can have major flaws (1:21:30);

- Results of John’s study looking at the seroprevalence of SARS-CoV-2, and the resulting vitriol revealing the challenge of doing science in a hyper-politicized environment (1:31:00);

- John’s excitement about the future (1:47:45); and

- More.

John’s background, and the synergy of mathematics, science, and medicine [2:40]

Overview

- John considers himself a “scientist in the works”

- “I’m trying to be a scientist. I think that this is not an easy job. It means that you need to reinvent yourself all the time. You need to search for new frontiers, for new questions, for new ways to correct errors and to correct your previous self, in some way.”

- Background in mathematics; he brings mathematics to the study of science

- Born in New York City, but grew up in Athens

- Both of his parents were physician-scientists; he heard their stories of clinical exposure but also saw them working on their research

How did you decide to also pursue something in the biological sciences in parallel, as opposed to staying purely in the natural or philosophical sciences of mathematics?

- Medicine had the attraction of being a profession where you can save lives: “The ability to make a difference for human beings and to save lives, to improve their quality of life, seem to be something that was worthwhile pursuing.”

- But mathematics is critical: “mathematics are the foundation of so many things and they can really transform our approach to questions that, without mathematics, it would be very difficult to make much progress.”

How mathematics, science, and medicine synergize

- John sees mathematics, science, and medicine as complementary to each other

- In fact, to do something truly meaningful, you need all three; otherwise you risk “losing the whole”

- Medicine offers amazing possibilities to help people

- However, the scientific method must be applied if you want to get reliable evidence (see Richard Feynman’s famous explanation of the scientific method)

- You also need quantitative approaches (i.e., mathematics)

“I think that none of them is possible to dispense without really losing the whole, and losing the opportunity to do something that really matters eventually.” —John Ioannidis

John’s studies:

- John won the highest honor that a graduating college student could win in mathematics in Greece at the time

- John finished medical school at the National University of Athens

- He went to Harvard for residency training

- Then to Tufts Medical Center for training in infectious diseases

- At the same time, he was also doing joint training in healthcare research

People who shaped John’s thinking:

- Professor of Epidemiology at Harvard, Dimitrios Trichopoulos

- In residency training, a great physician-scientist in infectious diseases, Bob Moellering, who was the physician-in-chief and a professor of medical research at Harvard: “an amazing personality in terms of his clinical acumen and his approach to patients”

- At the end of residency training (1992), John had a “revelation” after meeting Tom Chalmers and Joseph Lau at Tufts, who were advancing the frontiers of evidence-based medicine

“It was a revelation for me because somehow what they were proposing was mixing mathematics’ rigorous methods, evidence, and medicine in one coherent whole. . . [until that point] I was just seeing lots of clinical exposures where there was very little evidence to guide us. There was no data, or very poor data, and a lot of expert based opinion guiding everything that was being done.” —John Ioannidis

Why most published research findings are false [10:00]

John came onto Peter’s radar around 2005, when he published the paper Why Most Published Research Findings Are False

- The paper suggested that more than half of scientific papers claiming to have found a statistically significant signal were actually just “false positives”

- Peter was blown away by this paper, but he was “primed” to believe it because his mentor in post-doc training had once told him that ~70% of published papers were never cited again (outside of self-citation), suggesting that most papers are either irrelevant or wrong

John describing his 2005 paper

- The paper used a mathematical model, checked against empirical data that had accumulated over time, to assess the validity of the different pieces of research being produced

- The impetus for this paper was disillusionment: when evidence-based medicine started, many scientists believed they now had a tool for getting very reliable evidence for decision making

- But they quickly realized that the vast majority of the results were unreliable and could not be replicated: “It was the rule that we had either unreliable evidence or, perhaps even more commonly, no evidence.”

- The mathematical construct would try to: i) explain what is going on, and ii) predict what might happen if some of the circumstances of how we do research were to change

- The first question explored is the chance that a statistically significant result reflects a genuine “non-null” effect, rather than just a “red herring”
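The 2005 paper frames this question as the positive predictive value (PPV) of a claimed finding: PPV = (1 − β)R / (R − βR + α), where R is the pre-study odds that a tested relationship is true, α is the Type I error rate, and 1 − β is statistical power. The sketch below simply evaluates that basic formula. It omits the paper's extensions for bias and for multiple teams testing the same question, and the function name and example values are illustrative assumptions, not figures from the episode.

```python
def positive_predictive_value(pre_study_odds: float, alpha: float = 0.05, power: float = 0.8) -> float:
    """Probability that a statistically significant finding reflects a true (non-null) effect.

    pre_study_odds: R, ratio of true to null relationships among those tested.
    alpha: Type I error rate (the significance threshold).
    power: 1 - beta, the probability of detecting a true effect when it exists.
    """
    if pre_study_odds < 0 or not (0 < alpha < 1) or not (0 < power <= 1):
        raise ValueError("need pre_study_odds >= 0, 0 < alpha < 1, 0 < power <= 1")
    # Expected true positives per unit of null relationships: (1 - beta) * R
    true_positives = power * pre_study_odds
    # Expected false positives per unit of null relationships: alpha
    false_positives = alpha
    # (1 - beta)R / ((1 - beta)R + alpha), which equals (1 - beta)R / (R - beta*R + alpha)
    return true_positives / (true_positives + false_positives)


if __name__ == "__main__":
    # Compare fields with different pre-study odds, at high and low power
    for odds in (1.0, 0.1, 0.01):
        for power in (0.8, 0.2):
            ppv = positive_predictive_value(odds, power=power)
            print(f"R={odds:<5} power={power}: PPV={ppv:.3f}")
```

With the conventional α = 0.05 and 80% power, pre-study odds of 1:100 give a PPV of roughly 0.14, so most "significant" findings in such a field would be false positives; lowering power pushes the PPV down further.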

In order to calculate this, you need to take into account:

{end of show notes preview}

Would you like access to extensive show notes and references for this podcast (and more)?

Check out this post to see an example of what the substantial show notes look like. Become a member today to get access.

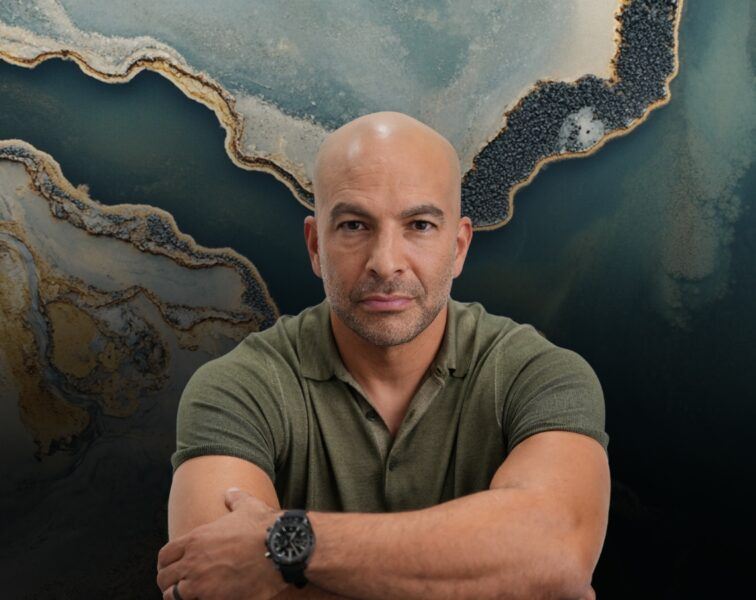

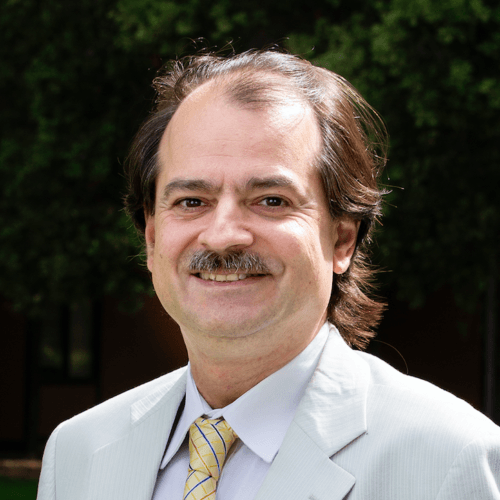

John Ioannidis, M.D., D.Sc.

John Ioannidis is a physician-scientist, writer, and Stanford University professor who has made contributions to evidence-based medicine, epidemiology, and clinical research. Ioannidis studies scientific research itself (meta-research), primarily in clinical medicine and the social sciences. He’s one of the world’s foremost experts on the credibility of medical research. He’s the co-director of the Meta-research Innovation Center at Stanford.

Ioannidis’ paper “Why Most Published Research Findings Are False” has been the most-accessed article in the history of the Public Library of Science (over 3 million views by 2020).